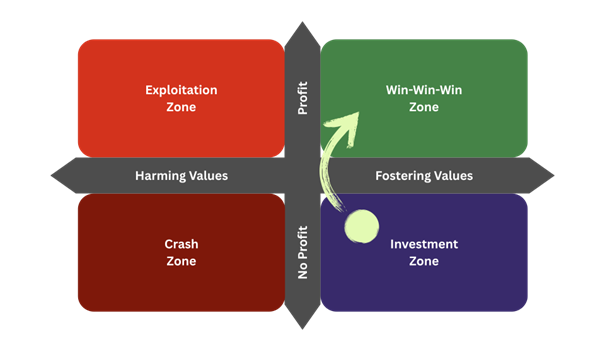

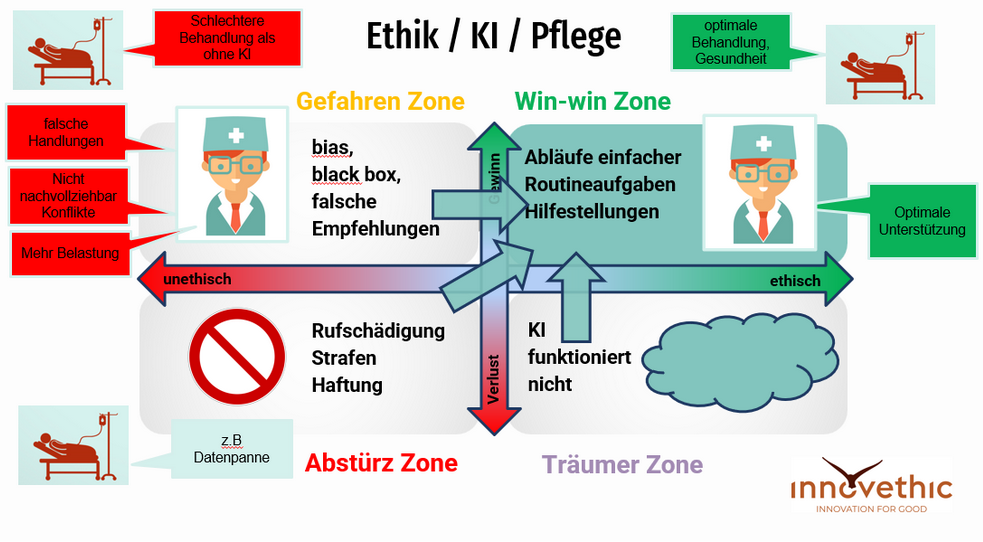

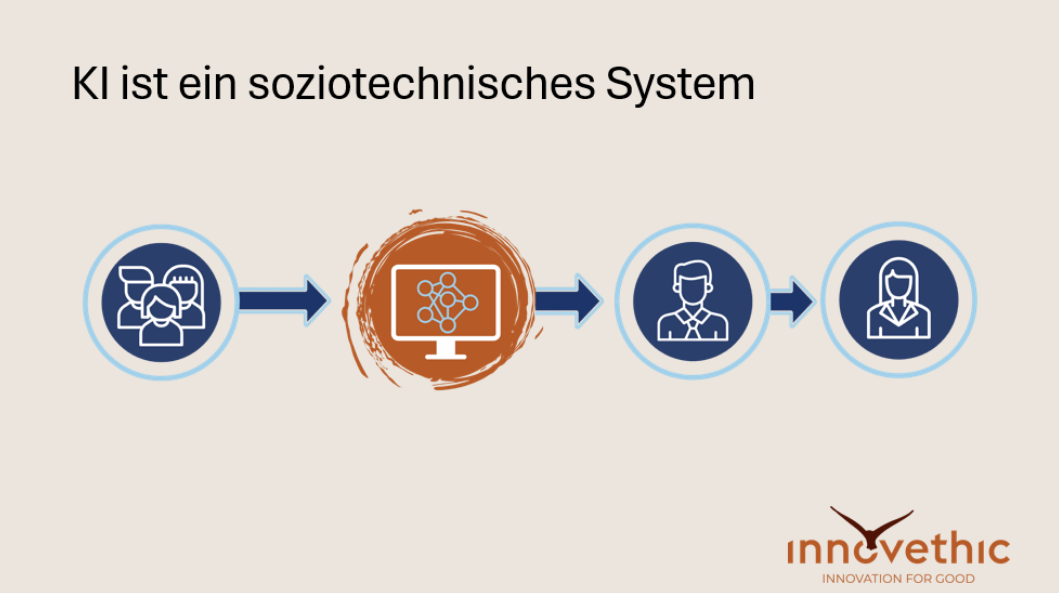

The discussion explored the tension between human intelligence and artificial intelligence (AI). Key questions included: Will machines soon be better than humans? What abilities will remain exclusive to humans? And how will AI change our self-image, our education, and our working world? The panel also considered whether we overestimate or underestimate AI, what ethical and regulatory challenges exist (for example, in connection with the AI Act), and how our trust in data-based systems is developing. The impact on schools, learning, and truth in the age of fake news and AI-generated art was also addressed. Broad in scope, the discussion covered philosophical, ethical, and social issues and ultimately posed the question: What makes us human?

The panel discussion featured:

- Dr. Robert König, philosopher and ethicist at the University of Vienna, science ambassador for the OEAD
- Mag. Lukas Madl, founder and CEO of innovethic, expert in responsible innovation and trustworthy AI
- Michael Volpert, founder of cup2gether, partner and advisor at structr
- Stefan Hupe, mentor in the Thinker Circle
- Students from the 8AB ethics class at BG Bachgasse

The discussion was moderated by Mag.a Gabi Holzer.
